Smart CAPA sounds straightforward on paper. Add better software, cleaner workflows, stronger dashboards, perhaps some AI support, and the CAPA system should become faster and more effective. In practice, implementation is much harder. CAPA sits at the intersection of quality, operations, data, people, and regulatory expectations, so “smart” CAPA usually fails when organisations treat it as a software project instead of a quality-system change. FDA’s CAPA training materials still centre on the basics: analyse quality data, investigate problems, identify actions, and verify effectiveness. Those core expectations do not disappear just because the workflow is digital.

1. Weak investigations get digitised, not improved

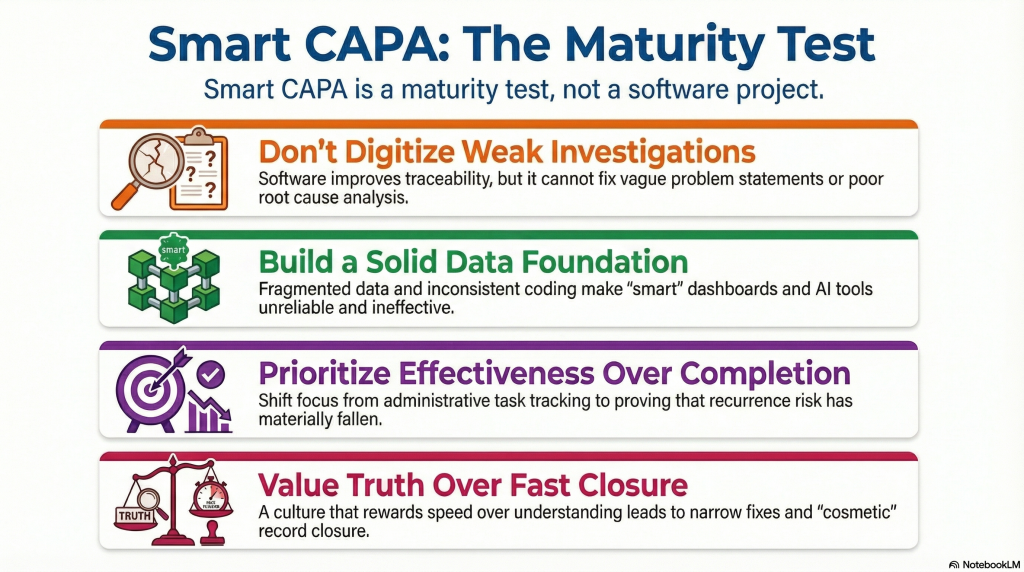

The first challenge is that many organisations already struggle with investigation quality before they introduce new CAPA tools. Root-cause analyses are often shallow, problem statements are vague, and corrective actions default to retraining, reminders, or SOP edits because those actions are easy to assign and close. A digital platform can make this process more traceable, but it does not automatically make the thinking better. ISPE’s quality materials continue to emphasise root cause analysis and effective CAPA implementation as core capabilities, not optional extras.

2. Too much focus on workflow, not enough on learning

A second challenge is that CAPA systems are often implemented around routing, escalation, due dates, and status visibility. Those features matter, but they mainly improve administration. The real purpose of CAPA is organisational learning and recurrence prevention. McKinsey’s smart-quality work argues that CAPA should shift from a heavy process for every issue toward a framework that focuses attention where it creates the most value. That is difficult to implement because many companies are used to managing CAPA as a documentation burden rather than as a learning mechanism.

3. Poor data foundations limit “smart” capability

Smart CAPA depends on good data, and that is where many implementations struggle. Event coding may be inconsistent, issue descriptions may be weak, related records may not be linked, and trend categories may differ across sites or functions. In that environment, dashboards can look sophisticated while the underlying intelligence remains poor. A system cannot spot meaningful patterns if the source data is fragmented or unreliable. This becomes even more important when organisations want to add analytics or AI on top of CAPA records.
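To make the data point concrete, here is a minimal Python sketch of what inconsistent event coding does to a trend count. The mapping table, category names, and record fields are all hypothetical and are not taken from any real CAPA platform or standard taxonomy. The sketch only shows that the same underlying issue can hide behind several site-specific labels until those labels are mapped to one shared category.

```python
from collections import Counter

# Hypothetical mapping from site-specific labels to a shared category.
# The labels and categories are illustrative assumptions only.
CATEGORY_MAP = {
    "label mix-up": "labelling",
    "labelling error": "labelling",
    "lbl err": "labelling",
    "equip failure": "equipment",
    "equipment breakdown": "equipment",
}


def normalise_category(raw: str) -> str:
    """Map a free-text site label to a shared category, or flag it as unmapped."""
    key = " ".join(raw.strip().lower().split())  # lower-case, collapse whitespace
    return CATEGORY_MAP.get(key, "unmapped")


def trend_counts(records: list[dict]) -> Counter:
    """Count events per shared category across all sites."""
    return Counter(normalise_category(r["category"]) for r in records)


# Illustrative records; the field names are assumptions.
records = [
    {"site": "A", "category": "Label mix-up"},
    {"site": "B", "category": "LBL ERR"},
    {"site": "C", "category": "Labelling  error"},
    {"site": "A", "category": "Equip failure"},
    {"site": "B", "category": "Operator issue"},
]

raw_counts = Counter(r["category"] for r in records)  # every label looks unique
shared_counts = trend_counts(records)                 # labelling issues now cluster

print(raw_counts.most_common())
print(shared_counts.most_common())
```

On the raw labels, no category appears more than once, so a dashboard would show no trend. After mapping, the three labelling events fall into one category, and the unmapped label is flagged for a person to classify instead of being silently dropped.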

4. Cross-functional ownership is hard to achieve

CAPA rarely belongs to one function alone. Quality may manage the system, but operations, engineering, manufacturing, supply chain, validation, and leadership all influence whether investigations are good and actions are effective. That makes implementation difficult because “smart CAPA” often exposes organisational boundaries. Problems that appear inside one record may actually come from weak handoffs, unclear decision rights, or recurring issues across departments. If ownership stays fragmented, the technology may improve visibility without improving system-wide response. McKinsey’s biopharma operations work highlights that digital tools create value only when they improve how interconnected operations actually function.

5. Effectiveness checks are often too weak

One of the hardest parts of smart CAPA is not opening or routing records. It is proving that the action worked. Many organisations still measure completion better than effectiveness. They can show that training happened, an SOP was revised, or a workflow was signed off, but not that recurrence risk materially fell. This is a major implementation challenge because software naturally supports task tracking more easily than it supports judgement about real-world effectiveness. FDA’s CAPA expectations remain clear that actions should be verified or validated for effectiveness, not just completed administratively.
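The gap between completion and effectiveness can be sketched in a few lines of Python. This is a simplified illustration, not a regulatory method. The `Period` type, the 50% reduction threshold, and the field names are assumptions. A real effectiveness check would also need a justified observation window, and usually a statistical view of whether the change is more than noise.

```python
from dataclasses import dataclass


@dataclass
class Period:
    events: int  # recurrences of the same failure mode in the window
    units: int   # exposure in the window, e.g. batches produced (must be > 0)

    @property
    def rate(self) -> float:
        return self.events / self.units


def effectiveness_check(before: Period, after: Period,
                        actions_complete: bool,
                        min_reduction: float = 0.5) -> dict:
    """Report task completion and recurrence reduction as separate findings.

    min_reduction is a hypothetical acceptance criterion; in practice it
    would be defined and justified in the CAPA plan before the action.
    """
    if before.rate > 0:
        reduction = 1 - after.rate / before.rate
    else:
        reduction = 0.0  # no baseline recurrence, so nothing to compare against
    return {
        "actions_complete": actions_complete,
        "rate_reduction": round(reduction, 2),
        "effective": actions_complete and reduction >= min_reduction,
    }


# All tasks were signed off, but recurrence barely moved.
weak = effectiveness_check(Period(8, 400), Period(7, 380), actions_complete=True)

# All tasks were signed off, and recurrence fell sharply.
strong = effectiveness_check(Period(8, 400), Period(1, 400), actions_complete=True)

print(weak)
print(strong)
```

The design choice worth noting is that `actions_complete` and `effective` are kept as separate fields. A record can be fully signed off and still fail the effectiveness criterion, which is exactly the case that task-tracking views tend to hide.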

6. Regulatory expectations raise the bar

In MedTech especially, smart CAPA now sits inside an updated regulatory setting. FDA states that the revised Quality Management System Regulation became effective on February 2, 2026, and it has also issued guidance on computer software assurance for software used in production or the quality system, including software used for CAPA routing and tracking. That means companies are not just deploying a convenience tool. They are implementing part of a regulated quality environment, which raises expectations for governance, intended use, and confidence in the software.

7. Culture often rewards closure over truth

Another challenge is cultural. Many CAPA systems are implemented in environments where teams feel pressure to close records quickly. That creates predictable behaviour: narrow problem definitions, conservative investigations, local fixes, and premature closure. A smarter digital platform does not solve that by itself. In some cases, it can make the problem worse by increasing visibility of overdue items and driving even more emphasis on speed over understanding. Smart CAPA only works when leaders value truth, escalation, and evidence more than cosmetic closure. McKinsey’s smart-quality perspective supports this broader shift from compliance-heavy processing toward higher-value quality decision-making.

8. AI creates promise, but also new implementation risk

Many organisations now want AI-enhanced CAPA: automated triage, pattern detection, draft root-cause suggestions, or smarter trend analysis. The opportunity is real, especially as McKinsey points to gen AI improving quality and productivity in biopharma operations. But AI also makes implementation harder because poor data, weak governance, and shallow investigations become even riskier when scaled through automation. Smart CAPA with AI still needs strong human review, clear boundaries, and disciplined oversight. Otherwise, the organisation may produce faster CAPAs without producing better ones.
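One way to picture "strong human review" is a simple gate between AI output and the CAPA record. The Python sketch below is a hypothetical illustration. The class, field names, and `ai_draft` label are assumptions, and no particular AI tool or CAPA system is implied. It shows only the control itself: an AI-drafted suggestion cannot enter the record until a named person has reviewed and accepted it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RootCauseSuggestion:
    capa_id: str
    text: str
    source: str = "ai_draft"           # hypothetical provenance label
    reviewed_by: Optional[str] = None  # named human reviewer, once reviewed
    accepted: Optional[bool] = None    # None means not yet reviewed

    def review(self, reviewer: str, accept: bool) -> None:
        """Record a human decision; an anonymous review is rejected."""
        if not reviewer.strip():
            raise ValueError("a named human reviewer is required")
        self.reviewed_by = reviewer
        self.accepted = accept


def approved_for_record(suggestions: list[RootCauseSuggestion]) -> list[RootCauseSuggestion]:
    """Return only the suggestions a named reviewer has explicitly accepted."""
    return [s for s in suggestions if s.reviewed_by and s.accepted is True]


# Illustrative drafts; identifiers and text are made up.
drafts = [
    RootCauseSuggestion("CAPA-001", "Line clearance step skipped at changeover"),
    RootCauseSuggestion("CAPA-001", "Operator error"),
    RootCauseSuggestion("CAPA-002", "Supplier lot variation"),
]

drafts[0].review("J. Smith", accept=True)   # accepted after investigation
drafts[1].review("J. Smith", accept=False)  # rejected as too shallow
# drafts[2] has not been reviewed, so it stays out of the record

approved = approved_for_record(drafts)
print([s.text for s in approved])
```

Unreviewed and rejected drafts stay visible as drafts but never count as investigation conclusions, which keeps the speed benefit of automation without letting it replace the judgement step.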

What organisations usually underestimate

The most underestimated challenge is this: smart CAPA is not mainly a technology upgrade. It is a maturity test.

It tests whether the organisation can:

- define problems clearly

- investigate systematically

- connect data across sources

- work across functions

- distinguish closure from effectiveness

- govern a regulated digital quality system properly

Without those capabilities, the software may still go live, but the CAPA system will not become truly smarter.

Conclusion

The challenges of implementing smart CAPA are not mainly about choosing the right platform. They are about investigation quality, data integrity, cross-functional ownership, effectiveness verification, regulatory discipline, and organisational culture. FDA’s current regulatory framework and software-assurance guidance make clear that digital tools can support CAPA, but they do not replace the fundamentals of quality management. The organisations that succeed with smart CAPA will be the ones that improve the thinking, governance, and learning around CAPA at the same time as they improve the technology.